Generative AI has emerged as the focus of this year’s KubeCon + CloudNativeCon. At most cloud computing conferences this year, GenAI has dominated keynote presentations and shed light on the evolution of cloud-native platforms.

Most companies that took the stage at KubeCon announced that they already leverage cloud-native platforms to support generative AI applications and large language models (LLMs). The tone was more “us too!” than a solid explanation of the strategic advantages of their approach. You can sense an air of desperation, especially from companies that pooh-poohed cloud computing in the beginning but had to play catch-up later. They don’t want to do that again.

What’s new in the cloud-native world?

First, cloud-native architectures are essential to cloud computing, but not because they provide quick value. They are a path to building and deploying applications that function in optimized ways on cloud platforms. Cloud-native uses containers and container orchestration as the base platforms but adds a variety of features built from both standard and non-standard components.

What needs to change in cloud-native to support generative AI? Unique challenges exist with generative AI, as was pointed out at the event. Unlike traditional AI models, where smaller GPUs may suffice for inference, LLMs require high-powered GPUs at all stages, even during inference.

The demand for GPUs is certainly going to explode, exacerbated by challenges regarding availability and environmental sustainability. This will take more power and bigger iron, for sure. That’s not a win in the sustainability column, but perhaps it needs to be accepted to make generative AI work.

As I pointed out last week, the resources required to power these AI beasties are much greater than for traditional systems. That’s always been the downside of AI, and it’s still a clear trade-off that enterprises need to consider.

Will cloud-native architecture and development approaches make this less of an issue? Efficient GPU use has become a priority for Kubernetes. Intel and Nvidia announced compatibility with Kubernetes 1.26 in support of dynamic resource allocation. The advantage of using 1.26 is better allocation of workloads to GPUs. This matters most on shared cloud infrastructure, where better allocation should reduce the amount of processor resources needed.
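To make the sharing argument concrete, here is a toy sketch in Python. It is not how Kubernetes or dynamic resource allocation works internally; it only compares giving every workload its own GPU with packing workloads onto shared GPUs using a simple first-fit-decreasing heuristic. The function names and the fractional demand figures are hypothetical, chosen purely for illustration.

```python
from typing import List


def dedicated_gpus(demands: List[float]) -> int:
    """One whole GPU per workload, no matter how little of it is used."""
    return len(demands)


def shared_gpus(demands: List[float], capacity: float = 1.0) -> int:
    """Pack workloads onto shared GPUs with first-fit decreasing.

    Each demand is the fraction of one GPU a workload needs
    (hypothetical numbers, not measurements).
    """
    free: List[float] = []  # remaining capacity on each GPU in use
    for demand in sorted(demands, reverse=True):
        if demand > capacity:
            raise ValueError("workload does not fit on a single GPU")
        for i, room in enumerate(free):
            if demand <= room + 1e-9:  # small tolerance for float rounding
                free[i] = room - demand
                break
        else:
            # No GPU in use has room, so bring another one online.
            free.append(capacity - demand)
    return len(free)


# Hypothetical inference workloads, each needing part of a GPU.
workloads = [0.5, 0.25, 0.25, 0.75, 0.5, 0.25]
print("dedicated:", dedicated_gpus(workloads))
print("shared:", shared_gpus(workloads))
```

With these made-up numbers, sharing cuts the GPU count in half. Real savings depend on how well actual workloads tolerate sharing, which is exactly what needs to be tested.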

Lab testing will be interesting. We’ve seen architectural features like this that have been less than efficient and others that work very well. We will have to figure this out quickly if generative AI is to return business value without requiring a significant amount of money. Also, better-optimized power consumption will impact sustainability less than we’d like to see.

Open source to the rescue?

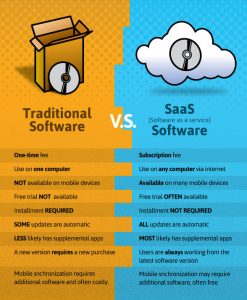

Enterprises need to consider the value of open source solutions, such as those that are part of cloud-native or that can provide a path to value for generative AI. Choosing between open source solutions and those from specific technology providers involves a range of cost and risk trade-offs.

Open source can be a religion for some businesses; some companies only use open source solutions, whereas others avoid them altogether. Both groups have good reasons, but I can’t help but think that the most optimized solution is somewhere in the middle. We must start our generative AI journey with an open mind and look to all technologies as potential solutions.

We’re at the point where the decisions we make now will affect our productivity and value in the next five years. Most likely it will be very much like the calls we made in the early days of cloud computing, many of which turned out to be less than correct and caused a considerable ROI gap between expectations and reality. We’ll be fixing those mistakes for many years to come. Hopefully, we won’t have to repeat our cloud lessons with generative AI.

Copyright © 2023 IDG Communications, Inc.